Coinciding with the previous post here defending Pilot Wave Theory, a pair of articles appeared defending the standard interpretation of Quantum Mechanics. Both are dismissive of PWT in a way that underscores the basic incoherence of the modern, math-first approach to doing physics. The first of these articles is a typical screed from Ethan Siegel in which he marshals a lot of known scientific facts and then assembles them into a circular argument that assumes the “standard model” to be correct, thus “proving” once again that the “standard model” as described by Siegel and acclaimed by all right-thinking scientists is the One True Model that all must revere. I’m not going to waste time on picking apart this particular circular argument. It is the arguments against PWT that are the subject of this post.

The other article, by Philip Ball, appeared in Quanta Magazine. In 2018 Ball published an even-handed account of quantum physics, Beyond Weird. This current work is primarily a discussion of a theory of decoherence developed by the physicist Wojciech Zurek. Decoherence is an attempt to explain how a particle evolves from the indeterminate “superposition of states” condition described by the standard interpretation to the “deterministic” state of everyday reality and standard physics, where particles are not smeared out but always locally distinct. As with the Siegel article, I’m only going to focus on the arguments used to dismiss Pilot Wave Theory from consideration as an alternative to the preferred dogma.

Both authors take the standard interpretation of quantum physics as a given. That standard interpretation can be characterized as an offshoot of Niels Bohr’s claim that the wavefunction represented all that could be known about the quantum state – it was fundamentally indeterminate and nothing could be known about the properties (location, spin, etc.) of a quantum particle prior to its observation (detection, measurement). To this was subsequently added the “explanation” that the indeterminacy was caused by the fact that the particle was not in any particular location prior to detection but was, in fact, smeared out in a “superposition of states”.

It was further stipulated that the smeared out state could not be observed because the very act of observing or detecting it would cause a mathematical formalism, the wavefunction, to collapse instantaneously, ensuring that the particle would always be found at some particular location. That particular location, however, could not be predicted by the wavefunction except as a probability. It is necessary at this point to step back and consider what a breathtakingly absurd, not to mention utterly unscientific, account of physical reality that is.

Absurd the standard interpretation may be, but it is nonetheless an unquestioned premise of both Ball and Siegel in their respective articles. The reason it is so casually accepted is not merely the groupthink, dogma, and inertia that pervade the scientific academy. There is, at root, the underlying premise of mathematicism – the scientifically baseless belief that mathematics somehow underlies and determines the nature of physical reality. Mathematicism is what allows otherwise rational people to believe that a particle must be smeared out in a superposition of states – because some mathematical wavefunction does not describe where the particle is.

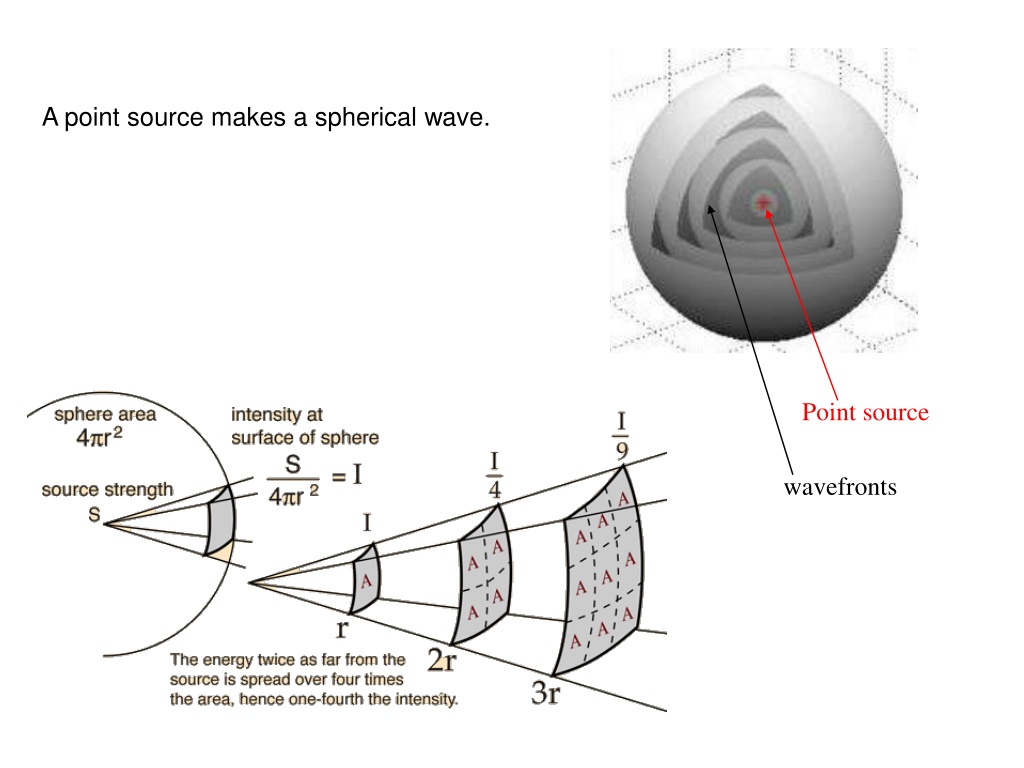

In distinct contrast to this widely accepted wavefunction-only account of quantum behavior there stands Pilot Wave Theory, which is not merely an alternative interpretation of the wavefunction-only story but constitutes a mathematically and physically distinct model of the quantum realm. In addition to the wavefunction, PWT also has a guiding equation, which describes the interaction between a particle and a pilot wave that produces the statistical outcomes described by the wavefunction. The statistical outcomes of PWT are the same because it has the same statistical formalism, the wavefunction.
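
For readers who want to see the two pieces side by side, here is a sketch of how the theory is usually written down in the textbook (de Broglie-Bohm) presentation, for N non-relativistic particles without spin. The notation is brought in purely for illustration and is an assumption on my part: the symbols $\psi$ (wavefunction), $Q_k$ (actual position of particle $k$), $m_k$ (its mass) and $V$ (potential) appear nowhere else in this post. The first line is the ordinary Schrödinger equation; the second is the guiding equation that the wavefunction-only account lacks.

$$
i\hbar\,\frac{\partial \psi}{\partial t} = -\sum_{k=1}^{N}\frac{\hbar^{2}}{2m_k}\,\nabla_k^{2}\psi + V\psi
$$

$$
\frac{dQ_k}{dt} = \frac{\hbar}{m_k}\,\operatorname{Im}\!\left(\frac{\psi^{*}\,\nabla_k \psi}{\psi^{*}\psi}\right)\!(Q_1,\dots,Q_N,t)
$$

In this standard form, if the particle positions start out distributed according to $|\psi|^{2}$, the guiding equation keeps them distributed that way at later times, which is the usual explanation given for why the statistical predictions match.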

This brings us to the objections raised against PWT by those who prefer the standard interpretation. Ethan Siegel has this to say on the matter:

“Contained in these non-local hidden variable theories are the hopes of everyone who seeks to make deterministic sense of the quantum Universe, and the hope that somewhere, somehow, there’s a way to extract more information about reality and what outcomes will occur than standard, conventional quantum mechanics permits.”

This is basically a strawman argument that seeks to characterize PWT as representing a “hope” to extract information or something about reality that the standard model does not permit. This deliberately sidesteps the fact that PWT produces exactly the same statistical results as the standard interpretation while providing a physically realistic description of the behavior of quantum particles as arising from a typical interaction of a particle with a wave.

For his part Philip Ball is a little more nuanced but his objection ultimately boils down to an aesthetic choice:

“Others invoke the description postulated by Louis de Broglie and later developed by David Bohm, in which a particle does have well-defined properties, but it is steered by a mysterious “pilot” wave that produces the strange wavelike behavior of quantum objects, such as interference…

All this has always struck me as fanciful. Why not just see how far we can get with conventional quantum mechanics? If we can explain how a unique classical world arises out of quantum mechanics using just the formal, mathematical framework of the theory, we can dispense with both the unsatisfactory and artificial cut of Bohr’s “Copenhagen interpretation” and the arcane paraphernalia of the others.”

Ball apparently finds the idea of a pilot wave “mysterious” and “arcane” while seeming rather sanguine about the empirically baseless idea that a particle can be in a “superposition of states”. An absurd metaphysical proposition like that is somehow more palatable than a “mysterious” but physically plausible one.

Like Siegel, Ball makes no reference to the fact that PWT is not simply a different interpretation of the wavefunction-only model, like Many-Worlds, but constitutes a separate and distinct qualitative and quantitative model of quantum reality, one that produces the exact same statistical outcomes as the standard, reality-challenged version preferred by both authors. PWT does this while providing a plausible physical mechanism that is consistent with the physics of larger scales.

What is most striking about these rather casual dismissals is the seeming preference for mathematical mysticism over the open-ended nature of the scientific endeavor which seeks to understand the physical cause of observations that might at first seem inconsistent and mysterious. Modern theoretical physicists prefer to explain “mysterious” observations with imaginary things that cannot be observed, measured, or detected, rather than do the hard work of investigating the physical cause of those observations that only seem, at first encounter, to be mysterious.

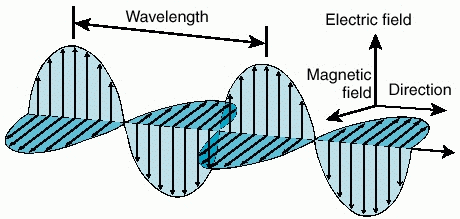

Which brings us to the elephant in the room, indicated by a straightforward scientific question: does the pilot wave of PWT refer to something physical as well as mathematical? A reasonable answer to that question is that there is a good deal of theoretical and observational evidence indicating that the pilot wave is a real physical entity that arises at the interface between a charged particle and the Ambient Electromagnetic Radiation that permeates the Cosmos. There is AER present in every laboratory in which quantum experiments are performed.

Modern Theoretical Physics pays no attention to the AER it swims in. MTP knows that the mass-energy content of an electron is equivalent to the energy of gamma radiation. MTP knows that a charged particle has a polarizing effect on nearby radiation. Despite this, MTP does not incorporate the AER present in a typical laboratory environment into its analysis of the quantum behaviors it observes. Instead we get metaphysical prattling about superposition of states and wave-particle duality.

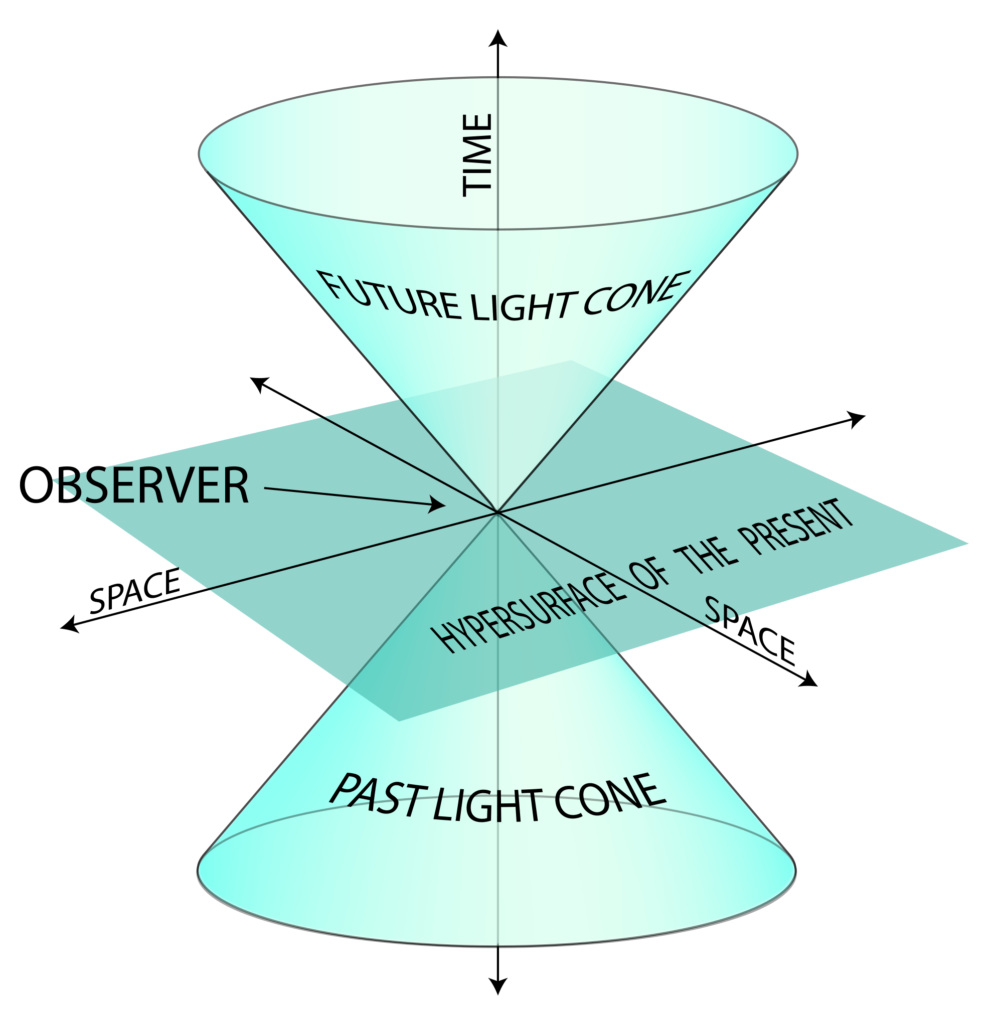

MTP knows all about the individual frequencies and the linear rays of light by which we observe distant cosmological objects. The totality of that omnidirectionally emitted radiation, however, makes no appearance in the standard model of cosmology. In its place MTP has substituted the scientifically inert concept of a substantival spacetime. The resulting Big Bang model bears no resemblance to empirical reality.

An elucidation, then, of the Pilot Wave Theory beyond its mathematical formalisms to a robust qualitative description of quantum scale behavior points directly at the AER as a causally interacting component of physical reality, and that analysis holds on all scales. The pilot wave of the theory is produced, in this conception, by the interaction of a charged particle (electron) with the AER of the laboratory. This qualitative description is made visually compelling by the work on Pilot Wave Hydrodynamics done at MIT by John Bush and his colleagues.

All of these considerations lead inexorably back to a question raised forty years ago by John S. Bell:

“Why is the pilot wave picture ignored in text books? Should it not be taught, not as the only way, but as an antidote to the prevailing complacency? To show us that vagueness, subjectivity, and indeterminism, are not forced on us by experimental facts, but by deliberate theoretical choice?”

—— Quoted in the Stanford Encyclopedia of Philosophy article Bohmian Mechanics.